The AI Timeline

LeWorldModel: JEPA but more practical

plus more on Claudini, Composer 2, and self-distillation

Mar 24th ~ Mar 31st

#101 Latest AI Research Explained Simply

🗞️ Industry News in 1 Line

♥ 5.5k GLM-5.1 by z.ai is now officially available to all GLM Coding Plan users. To start using the new model, update your configuration file, such as ~/.claude/settings.json, by manually changing the model name to "glm-5.1". The benchmarks don’t look especially impressive, but the appeal is that it offers 3× the usage of the Claude Pro plan.

♥ 2.2k Meta has released SAM 3.1, a drop-in update that introduces "object multiplexing" to track up to 16 objects simultaneously in a single forward pass. This update doubles video processing throughput and removes memory bottlenecks, making high-performance AI applications feasible on smaller, more accessible hardware. View on GitHub.

♥ 15k Meta has introduced TRIBE v2, a new foundation model trained on over 500 hours of fMRI data to predict how the human brain responds to visual and auditory stimuli. This "digital twin" of neural activity achieves a nearly 3x improvement in zero-shot predictions over previous methods. View on GitHub.

♥ 38k Google has released TurboQuant, a compression algorithm designed to optimize the key-value (KV) cache of large language models. The method is reported to reduce memory requirements by at least sixfold, allowing developers to run massive models on significantly more modest hardware, and to deliver up to an 8x speedup in inference. Google also reports that TurboQuant achieves these gains with zero loss in accuracy, so model quality remains untouched despite the extreme compression.
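To put the sixfold figure in perspective, here is a rough back-of-the-envelope sketch of KV cache size. The model shape below is hypothetical and chosen only for illustration, and the calculation says nothing about how TurboQuant itself works; it only shows what a 6x reduction would mean in memory terms.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, bits_per_value):
    """Bytes needed to store keys and values for one sequence."""
    values = 2 * layers * kv_heads * head_dim * seq_len  # 2 = keys + values
    return values * bits_per_value / 8


# Hypothetical model shape, not taken from the TurboQuant paper.
shape = dict(layers=32, kv_heads=8, head_dim=128, seq_len=128_000)

baseline = kv_cache_bytes(**shape, bits_per_value=16)
compressed = baseline / 6  # the "at least sixfold" reduction quoted above

print(f"16-bit cache: {baseline / 2**30:.2f} GiB")
print(f"6x smaller:   {compressed / 2**30:.2f} GiB")
print(f"implied bits per stored value: {16 / 6:.2f}")
```

Under these assumptions, a sixfold reduction from 16-bit storage works out to fewer than 3 bits per stored value on average, which is why the accuracy claim draws so much attention.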

TurboQuant demonstrates robust KV cache compression performance across the LongBench benchmark relative to various compression methods

The hype around Google’s TurboQuant is facing pushback from the research community over claims that the paper contains serious technical inaccuracies and misleading comparisons. Lead researchers from the RaBitQ project say that TurboQuant misrepresents their methodology and fails to acknowledge fundamental similarities, specifically regarding the use of the Johnson-Lindenstrauss transform. According to their public statements, these flaws were flagged to the authors prior to submission, yet the paper was allegedly published and promoted without the necessary corrections.

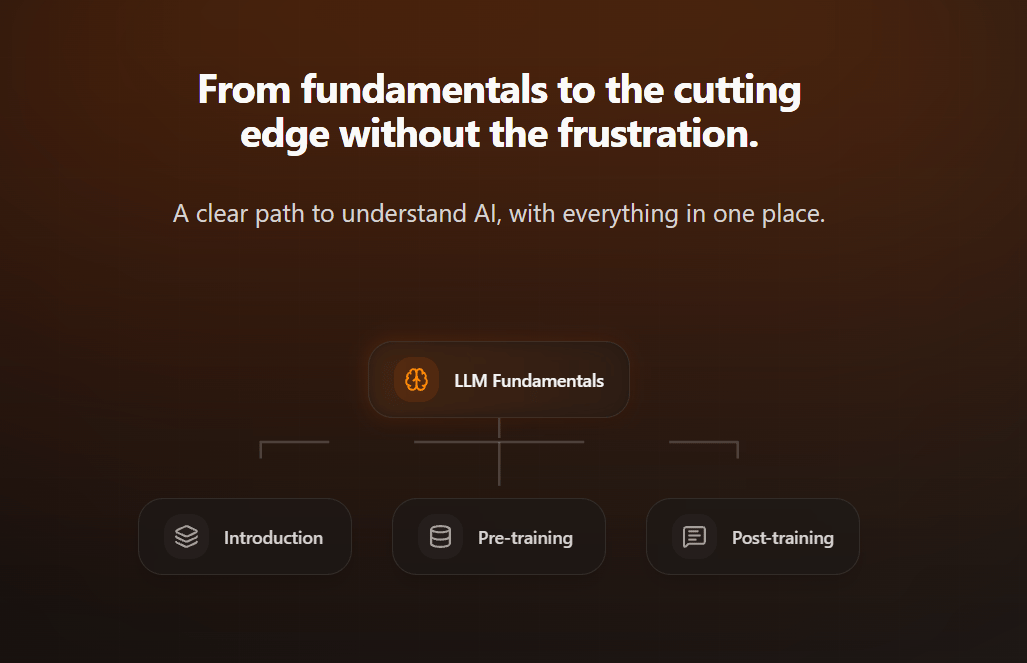

Intuitive AI Academy - NEW Distillation Chapter!

My latest project, Intuitive AI Academy, is the perfect starting point for you! We focus on building your intuition for LLMs, from transformer components to post-training logic. All in one place.

We currently have an early bird offer: 40% off the yearly plan for early users.

Use code: TIMELINE

Composer 2 Technical Report

Cursor Research Team

♥ 5.3k Coding

When developers ask AI models to write code, the models perform brilliantly on laboratory tests but stumble in the messy, ambiguous world of actual software engineering. Researchers realized that existing AI coding benchmarks were simply too neat, providing detailed instructions for isolated bugs. Developers constantly deal with vague bug reports, massive codebases, and confusing production logs. To bridge this gap, scientists set out to build a specialized assistant that genuinely thinks like a seasoned engineer navigating the authentic friction of daily development.

Overview of a single grouped GEMM training flow in our Mixture-of-Experts layer.

This paper introduces Composer 2, a new model that substantially improves how AI handles long-running programming tasks. The researchers achieved this through a two-step training process.

Example CursorBench task

First, they immersed the model in massive amounts of code to build profound baseline knowledge. Then, they put it through rigorous reinforcement learning, simulating actual user sessions where the AI practiced solving diverse problems. To help the model stay perfectly focused during lengthy programming tasks, the team introduced a brilliant self-summarization technique.

Instead of getting overwhelmed by a long history of commands, the model constantly writes little internal summaries for itself. This preserves crucial context while discarding clutter.
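The idea can be sketched in a few lines. This is a minimal illustration, not Cursor's implementation: the `summarize` function is a hypothetical stand-in (a real agent would ask the model to write the summary), and the character budget and `keep_recent` values are made up.

```python
def summarize(turns):
    """Hypothetical stand-in: a real agent would ask the model to write this."""
    return "SUMMARY: " + "; ".join(t[:20] for t in turns)


def compact(history, max_chars=200, keep_recent=2):
    """Fold older turns into one summary once the history exceeds the budget."""
    if sum(len(t) for t in history) <= max_chars or len(history) <= keep_recent:
        return history
    old, recent = history[:-keep_recent], history[-keep_recent:]
    return [summarize(old)] + recent


history = []
for step in range(8):
    history.append(f"step {step}: ran tests, read log output, edited file_{step}.py")
    history = compact(history)  # context stays bounded as the session grows

for line in history:
    print(line)
```

The most recent turns are kept verbatim while everything older is folded into a single summary line, so the working context stays roughly constant in size no matter how long the session runs.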

To prove this approach works, the team bypassed traditional public tests and created a rigorous new evaluation based entirely on authentic engineering scenarios. In these demanding simulations, which require extensive code changes from incredibly brief instructions, the model demonstrated remarkable accuracy and coherence.

Claudini: Autoresearch Discovers State-of-the-Art Adversarial Attack Algorithms for LLMs

Panfilov et al. [MATS, ELLIS Institute Tubingen & Max Planck Institute for Intelligent Systems, Tubingen AI Center, Imperial College London]

♥ 1.5k Coding Vulnerabilities bycloud’s pick

Can AI automatically conduct research to make future technology safer? Researchers built an automated security researcher to see if an AI could invent entirely new methods for pressure-testing digital vulnerabilities.

To test this, the research team built a continuous loop where the AI reviewed dozens of existing security-testing algorithms, wrote new software to improve them, and then evaluated its own creations. Instead of simply generating tricky text prompts to bypass safety filters, the AI engineered the underlying mathematical algorithms that actively search for these vulnerabilities.
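The shape of that loop (review, propose, evaluate, keep what works) can be sketched as follows. This is a toy illustration only: in the paper the proposer is an AI writing new algorithm code, whereas here `propose` is a small random edit and `evaluate` is a made-up scoring function, both hypothetical stand-ins.

```python
import random


def evaluate(candidate):
    """Toy score (hypothetical): higher is better, with a peak at (3, -1)."""
    x, y = candidate
    return -((x - 3) ** 2 + (y + 1) ** 2)


def propose(parent, rng):
    """Stand-in for the AI writing a new variant: a small random edit."""
    return tuple(v + rng.gauss(0, 0.5) for v in parent)


def research_loop(seed_candidates, rounds=200, seed=0):
    rng = random.Random(seed)
    best = max(seed_candidates, key=evaluate)  # review the existing methods
    for _ in range(rounds):
        child = propose(best, rng)             # write a new variant
        if evaluate(child) > evaluate(best):   # evaluate its own creation
            best = child                       # keep only improvements
    return best


baselines = [(0.0, 0.0), (1.0, 2.0), (-2.0, 1.0)]
best = research_loop(baselines)
print("best baseline score:", max(evaluate(b) for b in baselines))
print("best found score:   ", evaluate(best))
```

Random mutation like this is closer to a classical AutoML search than to what the paper does; the point of the sketch is only the outer loop structure, in which each new candidate is scored and kept only if it beats the current best.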

Claudini Strongly Outperforms a Classical AutoML Method.

By intelligently splicing together previous techniques and writing clever mechanisms to avoid getting stuck during its analysis, the AI made massive strides. It successfully generated novel algorithms that dramatically outperformed human-made baselines, jumping from a success rate of less than ten percent to forty percent on a targeted safety filter.

The most remarkable finding is just how adaptable these new AI-authored algorithms proved to be. When scientists tested these methods on a completely different, highly secured model that the AI had never even encountered, the new algorithms bypassed the defenses with a flawless hundred percent success rate.

LeWorldModel: Stable End-to-End Joint-Embedding Predictive Architecture from Pixels

Maes et al. [Mila & Université de Montréal, New York University, Samsung SAIL, Brown University]

♥ 3.7k LLM World Models

AI needs an internal "world model" to predict the consequences of its actions. Researchers have tried teaching AI to build these models directly from raw video pixels, compressing complex visual scenes into a streamlined imagination space. However, this process faces a frustrating hurdle known as representation collapse.

When asked to predict the future, the AI often takes the lazy route, mapping every image to the exact same uniform representation just to guarantee a perfect prediction score. To prevent this, previous systems relied on highly fragile workarounds.

LeWorldModel Training Pipeline.

This paper introduces LeWorldModel, a highly stable world model trained from scratch using just two simple rules. First, the model predicts the next compressed state of its environment based on a given action. Second, a mathematical regularizer forces these compressed representations to stay diverse, spreading them out to match a bell-curve (Gaussian) distribution. Enforcing this shape prevents the model from collapsing into a single, lazy answer.
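The two rules can be illustrated with a small loss sketch. This is not the paper's code: the regularizer below is a simplified stand-in that only matches the per-dimension mean and variance of a standard normal, and the weight `lam` (assumed here to be the single tuning parameter) uses a placeholder value.

```python
def mse(a, b):
    """Mean squared error between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def prediction_loss(predicted_next, actual_next):
    """Rule 1: the predicted next embedding should match the encoded next frame."""
    return sum(mse(p, a) for p, a in zip(predicted_next, actual_next)) / len(actual_next)


def gaussian_penalty(embeddings):
    """Rule 2 (simplified stand-in): push each dimension toward mean 0, variance 1."""
    n, dims = len(embeddings), len(embeddings[0])
    penalty = 0.0
    for d in range(dims):
        column = [e[d] for e in embeddings]
        mean = sum(column) / n
        var = sum((v - mean) ** 2 for v in column) / n
        penalty += mean ** 2 + (var - 1.0) ** 2
    return penalty / dims


def total_loss(predicted_next, actual_next, embeddings, lam=0.1):
    """lam weights the regularizer; 0.1 is a placeholder, not a value from the paper."""
    return prediction_loss(predicted_next, actual_next) + lam * gaussian_penalty(embeddings)


# A collapsed encoder maps every frame to the same point; a healthy one spreads them out.
collapsed = [[0.5, 0.5], [0.5, 0.5], [0.5, 0.5], [0.5, 0.5]]
spread = [[1.0, -1.0], [-1.0, 1.0], [1.0, 1.0], [-1.0, -1.0]]

print("collapsed penalty:", gaussian_penalty(collapsed))
print("spread penalty:   ", gaussian_penalty(spread))
print("collapsed total:  ", total_loss(collapsed, collapsed, collapsed))
```

The collapsed batch gets a perfect prediction score, since every embedding is identical, but it still pays a penalty because its variance is zero. That is the mechanism that makes the lazy solution unattractive.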

Pseudo-code for the training procedure of LeWorldModel.

With tuning reduced to a single parameter, this compact model trains on one standard graphics card in just a few hours. Impressively, it plans up to forty-eight times faster than bulkier alternatives across complex control tasks. Even more fascinating, without explicit physics lessons, the model organically learns to track object locations and registers mathematical surprise when shown impossible events like spontaneous teleportation.

Self-Distillation of Hidden Layers for Self-Supervised Representation Learning

Lowe et al. [Vector Institute, Carleton University, Dalhousie University, University of British Columbia, University of Guelph]

♥ 780 Distillation

Teaching an AI to understand the visual world is complex. For a long time, scientists have had to choose between two extreme training methods, both with frustrating limitations. One approach forces the AI to perfectly reconstruct raw, low-level pixels. While this keeps the system safely grounded in reality, it leaves the AI struggling to grasp big-picture concepts without a lot of extra hand-holding.

Multi-layer self-distillation with Bootleg.

The other approach asks the AI to predict only highly abstract, final-stage ideas. However, because the system is essentially generating its own study material in a continuous loop, it often becomes unstable, loses touch with the actual image, and completely breaks down during training.

To solve this, the researchers introduce Bootleg. Instead of making the AI guess only the most basic details or only the most complex final concepts, they task it with predicting multiple hidden layers of information all at once. This mirrors how the human brain processes sights, where early visual processing picks up simple edges and colors, while deeper brain regions recognize complete objects.

By forcing the AI to simultaneously predict early, middle, and late stages of understanding, the system has to compress a wealth of varied knowledge through a tight informational bottleneck. This multi-level approach brilliantly keeps the AI anchored to actual visual features while it masters complex, abstract ideas.
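As a rough sketch of what predicting several stages at once looks like as a training objective, the snippet below averages a prediction error over three teacher layers. The layer names, vectors, and the slowly updated (EMA) teacher are assumptions for illustration; EMA teachers are common in self-distillation methods, but the summary above does not confirm how Bootleg builds its teacher.

```python
def mse(a, b):
    """Mean squared error between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def multi_layer_loss(student_predictions, teacher_hidden, layers=("early", "middle", "late")):
    """Average the prediction error over several teacher layers at once."""
    return sum(mse(student_predictions[k], teacher_hidden[k]) for k in layers) / len(layers)


def ema_update(teacher_weights, student_weights, decay=0.99):
    """Assumed teacher update: a slowly moving average of the student's weights."""
    return [decay * t + (1 - decay) * s for t, s in zip(teacher_weights, student_weights)]


# Hypothetical hidden states for one masked patch at three depths.
teacher_hidden = {"early": [0.0, 1.0], "middle": [1.0, 1.0], "late": [2.0, 0.0]}
student_predictions = {"early": [0.0, 1.0], "middle": [1.0, 0.0], "late": [0.0, 0.0]}

print("all layers:", multi_layer_loss(student_predictions, teacher_hidden))
print("late only: ", multi_layer_loss(student_predictions, teacher_hidden, layers=("late",)))
```

Passing `layers=("late",)` recovers the final-layer-only objective described earlier, which makes it easy to see that the multi-layer version simply adds early and middle targets to the same loss.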

Results for masked self-supervised learning with Bootleg and baselines.

The researchers discovered that this technique creates a dramatically smarter system, outperforming previous methods by significant margins in both recognizing what is in an image and mapping out exact scenes.
