The AI Timeline

Rotate attention by 90 degrees...? Kimi's New Attention Residuals

plus more about V-JEPA 2.1, Mamba-3, and latent planning

Mar 17th ~ Mar 24th

#100 Latest AI Research Explained Simply

🗞️ Industry News in 1 Line

♥ 1.3k Xiaomi has announced the release of its MiMo-V2-Pro, Omni, and TTS models. The releases promise top-tier agentic performance, multimodal interaction that can see and hear, and expressive voice synthesis. You can test the models on the web or through the API portal.

♥ 1.5k MiniMax has launched its M2.7 model, which uses a recursive self-evolution architecture that contributed to an 88% win rate over its predecessor. The model achieves state-of-the-art performance on software engineering benchmarks and high-fidelity document editing, and shows stronger agentic capabilities with 97% skill adherence. Try it today on the web.

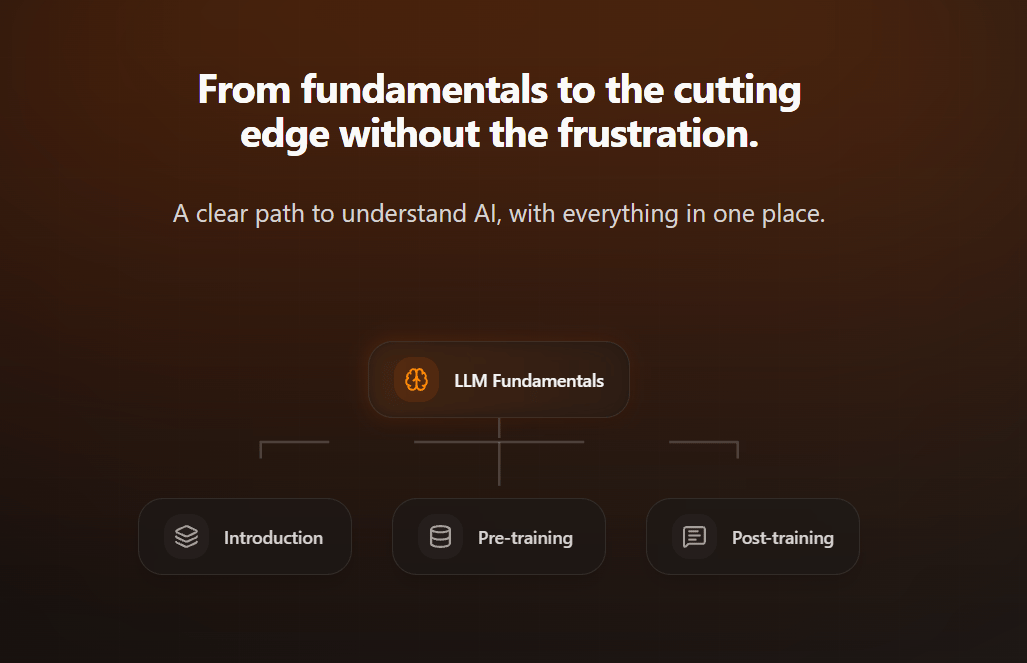

Intuitive AI Academy - NEW Distillation Chapter!

My latest project: Intuitive AI Academy has the perfect starting point for you! We focus on building your intuition to understand LLMs, from transformer components, to post-training logic. All in one place.

We just added a new chapter on Distillation!

We currently have an early-bird offer: early users get 40% off the yearly plan.

Use code: TIMELINE

Attention Residuals

Kimi Team

♥ 15k Attention

LLMs rely on residual connections to pass information through deep stacks of layers. While these connections act as a vital "gradient highway", they are a blunt instrument. In current architectures, each layer simply adds its output to the running hidden state, so the hidden state is an unweighted sum of the outputs of all previous layers. As a result, the magnitude of the hidden state grows unchecked with depth. This causes a dilution effect: early, important information gets buried under later contributions and becomes hard for deeper layers to retrieve, which limits how well the model can use its own depth.
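A toy simulation makes the growth concrete. It assumes every layer output is an independent random Gaussian vector, which real, learned layer outputs are not, so read it only as intuition: with a plain residual sum, the hidden-state norm grows roughly like the square root of depth, and the hidden state's cosine similarity with the first layer's contribution keeps shrinking.

```python
import numpy as np

def residual_stream_stats(depth, dim=1024, seed=0):
    """Simulate h = v_0 + v_1 + ... + v_{depth-1} with random layer outputs v_i.

    Assumption for illustration only: each v_i is i.i.d. Gaussian with unit
    per-coordinate variance. Returns the norm of the final hidden state and
    its cosine similarity with the first contribution v_0.
    """
    rng = np.random.default_rng(seed)
    outputs = rng.standard_normal((depth, dim))
    h = outputs.sum(axis=0)  # standard residual stream: uniform, unweighted sum
    norm = np.linalg.norm(h)
    cos_first = outputs[0] @ h / (np.linalg.norm(outputs[0]) * norm)
    return norm, cos_first

for depth in (4, 16, 64):
    norm, cos_first = residual_stream_stats(depth)
    print(f"depth={depth:3d}  |h|={norm:7.1f}  cos(h, v_0)={cos_first:.3f}")
```

In this setup, quadrupling the depth roughly doubles the norm, while the first contribution's share of the hidden state's direction roughly halves.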

Overview of Attention Residuals.

Researchers have introduced "Attention Residuals" (AttnRes) to replace this clumsy, fixed accumulation with a smarter mechanism. AttnRes allows each layer to selectively aggregate information from previous layers using learned, input-dependent weights. Instead of blindly adding everything together, the model now chooses which previous layers are most relevant to its current task.
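A minimal NumPy sketch of the idea, not the paper's implementation: each layer owns a learned query vector (the name `query` is hypothetical), scores every earlier layer's output for each token, and takes a softmax over depth. Normalizing the keys and scaling by √d are assumptions borrowed from ordinary attention. Because the scores depend on the layer outputs themselves, the mixing weights are input-dependent, as the text describes.

```python
import numpy as np

def softmax(x, axis):
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def attention_residual(prev_outputs, query):
    """Mix earlier layer outputs with input-dependent weights (illustrative sketch).

    prev_outputs: (L, T, d) outputs of the embedding and earlier layers.
    query:        (d,) hypothetical learned vector owned by the current layer.
    Returns the (T, d) aggregated input and the (L, T) mixing weights.
    """
    d = prev_outputs.shape[-1]
    # Assumption: normalize keys so scores depend on direction, not magnitude.
    keys = prev_outputs / (np.linalg.norm(prev_outputs, axis=-1, keepdims=True) + 1e-6)
    scores = keys @ query / np.sqrt(d)   # (L, T): one score per earlier layer, per token
    weights = softmax(scores, axis=0)    # normalize over depth, separately for each token
    mixed = np.einsum("lt,ltd->td", weights, prev_outputs)
    return mixed, weights

rng = np.random.default_rng(0)
prev = rng.standard_normal((6, 3, 8))    # 6 earlier sources, 3 tokens, width 8
mixed, w = attention_residual(prev, rng.standard_normal(8))
print(w.sum(axis=0))                     # each token's weights over depth sum to 1
```

Since the weights form a convex combination, the mixed vector can never be longer than the longest earlier output it draws from, unlike a plain sum; a zero query recovers a uniform average of earlier outputs.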

PyTorch-style pseudo code for Block Attention Residuals.

Storing and attending over every earlier layer's output would be expensive in massive models, so the team also developed "Block AttnRes," which partitions layers into blocks and attends over a smaller set of block-level representations. This retains the benefits of selective aggregation while keeping memory and communication costs low.
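One plausible reading of the block scheme, sketched under assumptions: layer outputs are summed inside each block as in a standard residual stream, and the current layer attends only over the per-block summaries. The helper names, the summation rule, and the block size are hypothetical; the authors' actual procedure is the one in the paper's PyTorch-style pseudocode figure.

```python
import numpy as np

def softmax(x, axis):
    x = x - x.max(axis=axis, keepdims=True)  # numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def block_summaries(layer_outputs, block_size):
    """Sum layer outputs inside each block of `block_size` consecutive layers.

    layer_outputs: (L, T, d). Returns (ceil(L / block_size), T, d).
    Only these summaries need to be kept, not every individual layer output.
    """
    L = layer_outputs.shape[0]
    return np.stack([layer_outputs[i:i + block_size].sum(axis=0)
                     for i in range(0, L, block_size)])

def block_attention_residual(layer_outputs, query, block_size):
    """Attend over block summaries instead of individual layers (hypothetical)."""
    summaries = block_summaries(layer_outputs, block_size)
    d = summaries.shape[-1]
    keys = summaries / (np.linalg.norm(summaries, axis=-1, keepdims=True) + 1e-6)
    weights = softmax(keys @ query / np.sqrt(d), axis=0)  # (num_blocks, T)
    return np.einsum("bt,btd->td", weights, summaries), weights
```

With 32 layers and blocks of 8, for example, only 4 summaries per token need to be stored and attended over instead of 32 layer outputs.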

Experiments on large-scale models, including a 48B-parameter architecture, showed that this method curbs the hidden-state growth seen with standard residual connections. By distributing signals and gradients more uniformly across all layers, AttnRes consistently improves performance on complex reasoning, math, and coding benchmarks.

Mamba-3: Improved Sequence Modeling using State Space Principles

Lahoti et al. [Carnegie Mellon University, Princeton University, Together AI, Cartesia AI]

♥ 1.5k Mamba bycloud’s pick

Transformer-based AI models are incredibly powerful, but they suffer from an efficiency bottleneck. Each newly generated token must attend to every previous token, so both the compute per generated token and the memory needed to cache that context (the KV cache) grow with sequence length.
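Back-of-the-envelope arithmetic shows how fast the cache grows. The formula is the standard KV-cache size (one key and one value vector per token, per KV head, per layer); the model configuration and the SSM state size are hypothetical numbers chosen for illustration, not figures from the paper.

```python
def kv_cache_bytes(seq_len, n_layers, n_kv_heads, head_dim, bytes_per_value=2):
    """Memory for cached keys and values in a standard Transformer decoder.

    The factor 2 counts one key and one value vector per token, head, and layer.
    """
    return 2 * n_layers * seq_len * n_kv_heads * head_dim * bytes_per_value

def ssm_state_bytes(n_layers, state_elems_per_layer, bytes_per_value=2):
    """A recurrent SSM keeps a fixed-size state, independent of sequence length."""
    return n_layers * state_elems_per_layer * bytes_per_value

# Hypothetical config with fp16 values (2 bytes each).
cfg = dict(n_layers=24, n_kv_heads=16, head_dim=64)
for seq_len in (2_048, 8_192, 32_768):
    kv_mib = kv_cache_bytes(seq_len, **cfg) / 2**20
    print(f"seq_len={seq_len:6d}  KV cache = {kv_mib:7.1f} MiB")
print(f"SSM state = {ssm_state_bytes(24, 2048 * 128) / 2**20:.1f} MiB (any seq_len)")
```

For this configuration the cache grows from 192 MiB at 2,048 tokens to 3,072 MiB at 32,768 tokens, while the hypothetical SSM state stays at 12 MiB regardless of length.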

This paper introduces Mamba-3, a new architecture designed with an "inference-first" mindset to address these efficiency challenges. By revisiting the mathematical foundations of State Space Models (SSMs), the team implemented three core improvements.

First, they developed a more expressive discretization method called "exponential-trapezoidal," which approximates the model's underlying continuous-time dynamics more accurately than the rule used in previous versions.
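For intuition, consider the scalar linear ODE h'(t) = a·h(t) + b·x(t), the kind of continuous system an SSM discretizes. A first-order exponential-Euler style update approximates the input term using only the current input; an exponential-trapezoidal update averages the (one-step-decayed) previous input with the current one. This is a minimal sketch with a fixed 1/2 weighting on a toy signal with a known solution; Mamba-3's actual rule, including how it parameterizes that weighting, is given in the paper.

```python
import math

def discretize_run(a, b, x, dt, steps, rule):
    """Integrate h' = a*h + b*x(t) from h(0) = 0 with a discretized recurrence."""
    decay = math.exp(a * dt)  # exact decay of the state over one step
    h, hs = 0.0, [0.0]
    for k in range(1, steps + 1):
        x_prev, x_cur = x((k - 1) * dt), x(k * dt)
        if rule == "exp_euler":
            # input integral approximated with the current input only
            h = decay * h + dt * b * x_cur
        elif rule == "exp_trapezoidal":
            # trapezoid rule on the input integral: average both endpoints,
            # with the older input decayed by one step
            h = decay * h + 0.5 * dt * (decay * b * x_prev + b * x_cur)
        else:
            raise ValueError(f"unknown rule: {rule}")
        hs.append(h)
    return hs

def exact(t):
    """Closed-form solution of h' = -h + sin(t) with h(0) = 0."""
    return 0.5 * (math.sin(t) - math.cos(t) + math.exp(-t))

dt, steps = 0.1, 100
for rule in ("exp_euler", "exp_trapezoidal"):
    hs = discretize_run(-1.0, 1.0, math.sin, dt, steps, rule)
    err = max(abs(h - exact(k * dt)) for k, h in enumerate(hs))
    print(f"{rule:16s} max error = {err:.2e}")
```

The trapezoidal variant is second-order accurate in the step size, so its error here is far smaller at the same cost per step.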

Second, they incorporated a complex-valued state update rule. This acts like a "rotary" mechanism that lets the model track state, for example solving parity tasks, which earlier linear models of this kind could not master.
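A toy illustration of why rotation helps: treat the state as a unit complex number and rotate it by π whenever the input bit is 1. This hand-built rule is not Mamba-3's learned update; it only shows that a complex (rotary) transition can represent sign flips such as parity, whereas a real per-step multiplier confined to (0, 1) can only shrink the state, never flip it.

```python
import cmath
import math

def parity_by_rotation(bits):
    """Track parity with a unit complex state: rotate by pi for every 1 bit.

    Each 1 flips the state between +1 and -1; each 0 leaves it unchanged.
    """
    state = 1 + 0j
    for bit in bits:
        state *= cmath.exp(1j * math.pi * bit)  # input-dependent rotation angle
    return int(state.real < 0)  # odd number of flips -> state near -1

print(parity_by_rotation([1, 0, 1, 1]))  # three 1s -> odd parity -> 1
print(parity_by_rotation([1, 1, 0, 0]))  # two 1s -> even parity -> 0
```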

Finally, they shifted to a multi-input, multi-output (MIMO) formulation. This adjustment lets the model perform more computation during decoding, which is limited by memory traffic rather than compute, without increasing the size of its state or slowing down its response time.
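A shapes-level sketch of the SISO vs. MIMO distinction, assuming a matrix-valued state H of size N×P updated by a decay plus an outer-product write. The function names and the scalar decay are hypothetical simplifications, not Mamba-3's kernel. The point is arithmetic intensity: decoding is dominated by reading and writing H, so a rank-r write does more useful work per byte of state moved while H stays the same size.

```python
import numpy as np

def siso_update(H, decay, b, x):
    """One step with a rank-1 write: H <- decay * H + outer(b, x)."""
    return decay * H + np.outer(b, x)

def mimo_update(H, decay, B, X):
    """One step with a rank-r write: H <- decay * H + B @ X.T.

    B: (N, r), X: (P, r). H keeps its (N, P) shape, but each step performs
    r times as many multiply-adds for the same read and write of H.
    """
    return decay * H + B @ X.T

rng = np.random.default_rng(0)
H = np.zeros((16, 8))  # state: N=16, P=8
H1 = siso_update(H, 0.9, rng.standard_normal(16), rng.standard_normal(8))
H4 = mimo_update(H, 0.9, rng.standard_normal((16, 4)), rng.standard_normal((8, 4)))
print(H1.shape, H4.shape)  # same state size either way
```

A rank-r MIMO write equals r rank-1 writes applied together, so the extra compute fits into a single pass over the state.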

These refinements allow Mamba-3 to achieve a remarkable balance. At the 1.5B scale, it outperforms top-tier competitors in downstream accuracy while simultaneously matching the language-modeling capabilities of its predecessor at half the state size.

Prefill and Prefill+Decode latency across sequence lengths.

V-JEPA 2.1: Unlocking Dense Features in Video Self-Supervised Learning

Mur-Labadia et al. [FAIR at Meta, Universidad de Zaragoza]

♥ 1.3k JEPA

To be truly useful, an AI needs to be a "jack-of-all-trades": it must understand both the big picture (like identifying a person’s action) and fine-grained local details (like pinpointing the exact edge of a glass for a robot to grasp). Previous models, including earlier members of the V-JEPA family, excelled at global understanding but struggled to extract precise local information, resulting in noisy, fragmented visual representations.

V-JEPA 2.1 Architecture

This gap limited their effectiveness in tasks that demand spatial precision, such as depth estimation or delicate robotic manipulation. The team found that the "missing link" was the training objective: earlier V-JEPA models were trained only to predict the representations of the masked (hidden) patches of an image or video.

V-JEPA 2.1 ViT-G performance across dense and global prediction tasks.

Because the model was never asked to account for the visible parts of the scene, it learned to treat those visible regions as global summaries rather than detailed spatial maps. To fix this, the paper introduces a "dense prediction loss," which forces the model to learn from both the hidden and the visible parts of the input.
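A toy comparison of the two objectives. It assumes per-token features are compared with an L1 error and that the visible-token term is added with a fixed weight (`visible_weight` is a hypothetical parameter); the actual V-JEPA 2.1 targets and weighting may differ. The key property shows up directly: the masked-only loss is blind to errors on visible tokens.

```python
import numpy as np

def jepa_losses(pred, target, mask, visible_weight=0.5):
    """Compare a masked-only objective with a dense (masked + visible) one.

    pred, target: (T, d) predicted and target features per token.
    mask: (T,) bool, True where the token was hidden from the encoder.
    visible_weight: hypothetical weight on the visible-token term.
    """
    per_token = np.abs(pred - target).mean(axis=-1)   # per-token L1 error (assumption)
    masked_loss = per_token[mask].mean()              # masked-only, original-style objective
    visible_loss = per_token[~mask].mean()            # extra term: supervise visible tokens too
    dense_loss = masked_loss + visible_weight * visible_loss
    return masked_loss, dense_loss

pred = np.zeros((4, 2))
target = np.array([[1.0, 1.0], [0.0, 0.0], [0.0, 0.0], [1.0, 1.0]])
mask = np.array([True, False, False, False])
# The error on visible token 3 is ignored by the masked-only loss but not the dense one.
print(jepa_losses(pred, target, mask))
```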

By supervising every part of the scene, the model is compelled to build a coherent, fine-grained understanding of where objects actually exist in space. They further refined this by applying the learning signal at multiple layers deep within the network, a method called "deep self-supervision", and by using specialized tokenizers that handle images and videos in their native formats.
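Deep self-supervision can be sketched as averaging the regression loss over several depths instead of only the final layer. Which layers are paired, how their targets are built, and the equal per-level weights below are all assumptions for illustration.

```python
import numpy as np

def deep_self_supervised_loss(preds_per_level, targets_per_level, level_weights=None):
    """Combine an L1 regression loss computed at several network depths.

    preds_per_level / targets_per_level: lists of (T, d) arrays, one pair per
    supervised level (a hypothetical pairing of predictor and target layers).
    Returns the weighted total and the per-level losses.
    """
    n = len(preds_per_level)
    if level_weights is None:
        level_weights = [1.0 / n] * n  # assumption: equal weight for every level
    losses = [float(np.abs(p - t).mean()) for p, t in zip(preds_per_level, targets_per_level)]
    total = sum(w * l for w, l in zip(level_weights, losses))
    return total, losses
```

Because intermediate layers receive their own learning signal, they are pushed to keep spatially detailed features rather than relying on the final layer alone.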

These changes, combined with scaling the model and the diversity of training data, resulted in a system capable of state-of-the-art performance in both high-level action forecasting and precise, low-level spatial tasks like depth perception.

Temporal Straightening for Latent Planning

Wang et al. [New York University, Brown University, University of Toronto]

♥ 1.2k JEPA

When AI agents interact with complex environments, they typically translate high-dimensional sensory data, like raw video, into a "latent space" to make decisions. These latent spaces are often highly curved. For an agent trying to navigate, this means the shortest path between two points in its internal map doesn't correspond to the shortest physical path. Because these internal maps are so twisted, gradient-based planners, which follow the gradient of a distance-to-goal cost, often get stuck or perform poorly, forcing researchers to rely on computationally expensive search-based methods.

UMaze: ground-truth geodesic distance vs. ResNet-global after straightening.

This paper introduces a way to "straighten" these maps, helping AI agents see the world more linearly so they can plan their actions more efficiently. The researchers developed a method called "temporal straightening" to fix the distorted geometry of latent spaces.

Latent Curvature and Open-Loop GD Success Rate for Different Encoders. Higher cosine similarity indicates lower curvature.

This is based on a simple idea: as the agent moves, the model tracks its latent trajectory and minimizes its "curvature" by keeping the velocity vectors of consecutive steps as aligned as possible. This pushes the model to learn representations in which movement through latent space follows a straight, predictable line rather than a chaotic curve.
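The straightening term can be sketched as a cosine penalty on consecutive latent velocities, in line with the figure caption's use of cosine similarity as the curvature measure. The exact loss form (here, 1 minus cosine similarity, averaged over the trajectory) and its weight relative to the main training objective are assumptions for illustration.

```python
import numpy as np

def curvature_loss(latents, eps=1e-8):
    """Penalize turning between consecutive latent velocity vectors.

    latents: (T, d) latent states z_0..z_{T-1} along one trajectory.
    Velocities are v_t = z_{t+1} - z_t; the loss is mean(1 - cos(v_t, v_{t+1})),
    which is (numerically) 0 for a perfectly straight trajectory.
    """
    v = np.diff(latents, axis=0)                               # (T-1, d) velocities
    v = v / (np.linalg.norm(v, axis=-1, keepdims=True) + eps)  # unit directions
    cos = (v[:-1] * v[1:]).sum(axis=-1)                        # (T-2,) consecutive cosines
    return float((1.0 - cos).mean())

t = np.linspace(0.0, 1.0, 10)
theta = np.linspace(0.0, np.pi, 10)
print(curvature_loss(np.stack([t, 3 * t], axis=1)))                        # straight: ~0
print(curvature_loss(np.stack([np.cos(theta), np.sin(theta)], axis=1)))   # curved: > 0
```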

By adding a straightening objective to the standard training process, the model learns to map observations so that Euclidean distance, the simplest way to measure "how far" a goal is, closely matches the actual distance to the goal. This has a large effect on planning: because paths are straighter, the cost landscape that gradient-based optimization has to descend is better behaved, and the optimization becomes much more stable.
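A toy check of why straightness makes Euclidean distance trustworthy, using hand-made 2-D trajectories rather than learned latents: along a curved (U-shaped) trajectory, the straight-line distance between the endpoints underestimates how far the agent actually travels, while along a straight one the two coincide.

```python
import numpy as np

def path_vs_straight_line(latents):
    """Return (path length along the trajectory, Euclidean start-to-end distance)."""
    path = np.linalg.norm(np.diff(latents, axis=0), axis=-1).sum()
    chord = np.linalg.norm(latents[-1] - latents[0])
    return float(path), float(chord)

t = np.linspace(0.0, 1.0, 50)
straight = np.stack([t, 2 * t], axis=1)                             # straight trajectory
u_shape = np.stack([np.cos(np.pi * t), np.sin(np.pi * t)], axis=1)  # half circle
print(path_vs_straight_line(straight))  # path and chord agree (up to rounding)
print(path_vs_straight_line(u_shape))   # path length clearly exceeds the chord
```

For the half circle, the endpoints are 2 units apart in a straight line, but the trajectory itself is about π long, which is exactly the kind of mismatch that misleads a planner that minimizes Euclidean distance.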

In experiments, this approach drastically improved success rates across a variety of navigation and manipulation tasks, allowing agents to reach goals with far greater precision and efficiency without needing the heavy compute power typically required for complex decision-making.
